(Ab)using General Search Algorithms on Dynamic Optimization Problems

In retrospect, my most ambitious blog yet. As it goes, I was reading “Artificial Intelligence. A Modern Approach” the other day. In one of the earlier chapters the authors discuss general search algorithms: breadth-first search, depth-first search, uniform-cost search (Dijkstra), and variations of those. A bit later they also cover Monte Carlo tree search as a way of finding approximate solutions in big state spaces. Myself, I haven’t played a lot with general search algorithms but I have played a lot with dynamic optimization problems, back when I did my PhD in economics. Dynamic optimization problems are a subclass of general search problems, with some extra restrictions on the nature of the state space and the goal function. But, being a subclass of general search problems, they are still about finding optimal paths in state spaces, and so general search algorithms can be applied to them. And I was wondering, how would these more general algorithms differ from the methods I’m used to, namely Bellman’s optimality principle and Pontryagin’s maximum principle? For they all seem to be doing more or less the same thing...

So, here is my plan for the blog. I’ll formulate a dynamic optimization problem. It’ll be a toy problem, which can be easily solved analytically in less time than it takes to program any of the numerical solutions. Then I’ll cover, in turn, Bellman’s principle, Dijkstra’s algorithm, a Monte Carlo tree search, and Pontryagin’s maximum principle as means of solving the posted problem numerically. For each algorithm I’ll give a quick refresher on how it works and, fingers crossed, will supply animations to show how it searches the state space.

A remark is in order. Specialized algorithms, if applicable, are typically more efficient than general algorithms. Say, we wouldn’t expect Dijkstra’s algorithm to perform better on a dynamic optimization problem than Bellman’s principle does. And indeed it is much slower and uses more memory. Such misplaced comparisons are not the point of the blog. (Though I’ll give some benchmarks anyhow because I like making silly benchmarks. Who doesn’t?) The point is rather to contemplate the various ways of traversing a state space in search of the optimum. And to make cool animations, of course.

The toy problem is as follows. Let denote time, let denote a state variable with , and let denote a control variable. The control variable need not be continuous in . For the equation of motion we take . We are asked to solve the following optimization problem:

The problem is borrowed from “Optimization: Insights and Applications” by Jan Brinkhuis and Vladimir Tikhomirov (Example 12.2.6, p. 489).

For only Chuck Norris can enumerate a continuum of state spaces, I’ll be considering a discretized version of the problem. I’ll split the time interval into steps and the control variable interval into steps. For a quick but visual refresher on how algorithms work I’ll use a coarse dicretization with and . And for obtaining approximate solutions, as well as for the accompanying animations, I’ll use a much finer discretization with and . The coarse discretization looks as follows, where ▢ denotes a state and → denotes an action, i.e. a specific choice of the control variable.

Here, the actions at the terminal nodes are omitted and in the upcoming calculations I set at the terminal nodes. It’s an arbitrary choice which has a negligible impact on the solution if the discretization is fine enough.

Bellman’s Principle

The original problem asks us to find the optimal solution starting at state A. Suppose we knew the optimal solutions if we were to start at states B, C, or D. Then finding the optimal solution at A would be straightforward: we try , and , and choose the action that minimizes the sum of the payoff at that moment and what we get from the consequent optimal solution. The same idea applies to finding solutions at B, C, or D, and so on. Then, having fixed at the terminal points, we can start at the end and compute the optimal solution backwards (tap to single step, double tap to resume animation):

Here, is defined as the optimal continuation value if we start with , i.e.

Or, to be precise, a discretized analogue of that but let me skip over the detail.

A note on colours, or the algorithm in brief:

Blue shows visited states and has nothing to do with the democrats.

For each unvisited state we consider all the possible actions in that state, highlighted in orange, and choose the action that minimizes the current payoff, i.e. , plus the continuation value from following that action. The chosen action is highlighted in purple.

When is reached, the sequence of optimal actions that starts at the origin gives the solution.

When viewed broadly, Bellman’s principle can be applied to any problem with an optimal substructure. That is, whenever we can solve a problem by first solving the constituent subproblems, we can view such an approach as an application of Bellman’s principle. And examples abound in everyday life.

If I’m making a stew, I focus on the optimal combination of mirepoix, protein and booze, effectively assuming that I will prepare an optimal mirepoix to start with. And yes, my friends might question if my mirepoix is all that optimal and a chef might question the assumption that the optimality of the mirepoix is independent of the protein and booze that get added to it later. But setting those concerns aside, making a stew uses Bellman’s principle.

The coarse discretization gives , which is a poor approximation to the analytical solution given by . Switching to the fine discretization with and gives . That looks better. The fine discretization also presents an opportunity to partake in the internet culture with one’s own animated gif. (Well, technically it’s an apng but it’s short and it loops, so I call it a gif.)

Blue shows the part of the state space that has been explored so far, with orange indicating the states visited most recently. Purple highlights some of the optimal paths leading to the right boundary. Finally, dark green shows the optimal path that solves the problem, namely the one that starts at

Dijkstra’s Algorithm

Bellman’s principle can be seen as a breadth-first search that starts at the terminal nodes (). Alternatively, we can do a breadth-first search that starts at the initial node (, ). In either case the whole state space needs to be explored to arrive at the optimal solution. In contrast, the idea of Dijkstra’s algorithm, also known as a uniform-cost search in the AI literature, is to explore the most promising directions first, potentially finding an optimal solution long before the whole state space gets visited.

Dijkstra’s algorithm is formulated in terms of costs, and the proof of its optimality relies on the assumption that the costs are non-negative. To apply Dijkstra’s algorithm to the stated problem we need to ensure that the expression under the integral is non-negative. We can do so by considering a modified optimization problem,

Adding a constant doesn’t change the optimal control path . And, given that , we have for whatever we choose. So, and we can run Dijkstra’s algorithm (tap to single step, double tap to resume animation):

Here, gives accrued costs, i.e.

A purple highlighting indicates optimal costs, that is, . The costs elsewhere need not be optimal and are subject to revision during the run of the algorithm (as bad luck has it, such cases don’t happen in the diagram above).

A note on colours, or the algorithm in brief:

Blue states are visited states. Blue arrows show candidate paths that minimize the costs needed to reach those states. These paths are the best discovered so far and if a better path is found the arrows will be updated.

At each stage of the algorithm when all the states are coloured blue we select the state with the smallest accrued costs among those that can still be explored. The selected state is highlighted in purple. It can be shown that the blue arrow leading to the selected state gives in fact the minimum possible costs. Such optimal actions (arrows) get highlighted in purple.

Once a new state is selected for exploration, we visit all of its children and compute the costs required to reach them. This operation is shown in orange. If a child was previously visited, we compare the new route (orange arrow) with the route previously found to be the best (blue arrow) and select the one yielding lower costs.

Once any of the optimal paths reaches the right boundary, we’re done.

Once we subtract back the added constant we get the same solution as before. Phew... Now on to the finer mesh.

As with Bellman, blue indicates the visited part of the state space, orange is the most recent visits, purple shows some of the optimal paths, and dark green highlights the optimal path that is the solution to the problem. As hoped, the green path here is the same as the green path in the animation of Bellman’s principle. The purple paths are different, of course. With Bellman, a purple path indicates an optimal traversal starting from a fixed point on the right boundary, and with Dijkstra a purple path indicates an optimal traversal starting from the origin.

We clearly see that Dijkstra’s algorithm ends up visiting only some of the state space. On some problems this saving could be substantial, especially if a good admissible heuristic can be chosen (with an heuristic, it becomes the A* algorithm). On this problem, not so much, about half. At the same time, the algorithm is more complex than Bellman’s principle, and we’ll see from the benchmarks later on that on this specific problem the complexity outweighs the benefit of not needing to explore the whole state space.

Monte Carlo Tree Search

MCTS can play go much better than I do, so how difficult can it be to use MCTS to solve a dynamic optimization problem? Motivated by this slightly nonsensical logic, I gave MCTS a spin. The answer is, rather more difficult than I initially anticipated. In comparison, barring a few trivial to debug mistakes, I’ve programmed Bellman’s method and Pontryagin’s maximum principle in one go and each within a day. Implementing Dijkstra’s algorithm was also straightforward albeit took more effort as this commit reveals:

Whereas, as of this writing, my MCTS saga is still ongoing. Were I to believe in bad omens, I could have seen my struggles from the get-go because the first time I ran my implementation I got a segmentation fault. (Caused by node->size == 0; where node->size = 0; was meant to be.) Then there was a mistake in the implementation of the random policy. Then there was a mistake in the back-propagation code. Then I could not find any more mistakes using gdb, so I had to program an ncurses visualization to see what was going on:

The visualization revealed a bunch of logical mistakes and having addressed those I got my first correct digit. That is, I got -4.55 with the right answer being -4.67. It also took about 14 min to compute that answer, which is probably longer than it would take to solve the problem on paper. The crux here is well known but at times blissfully forgotten when people get excited about stochastic methods, Bayesian estimators included. Stochastic methods are provably correct if they can explore the whole state space. Yet the whole point is that the state space is usually way too large to explore fully, so we bargain correctness for feasibility and explore only a small part of the state space. That bargain is very difficult to get right. What it means in practice is that there are any number of ways to implement an MCTS, some much better suited for some problems than for others. Then there are the metaparameters. So, what am I spending my time on now? Well, quickly programming the golden-section search to optimize the metaparmeters, and then just trying out various modifications to MCTS and keeping score which one does better.

Many days and 13 versions later, possibly missing a zombie outbreak in the process, my MCTS implementation finally works on this problem. One of those, if I knew I wouldn’t have started... Anyhow, let’s shallow dive into MCTS (tap to single step, double tap to resume animation).

Here, is defined as our best guess at the optimal continuation value if we start with . As more paths are sampled, these values are improved and hopefully converge to their true values in the neighbourhood of the optimal path. In the example above the convergence is not achieved after 7 iterations and we end up with a sub-optimal solution of .

This time around the colour notation is a tad different.

Blue states indicate how far the tree has grown.

To grow a tree, a path is drawn from left to right. While the path traverses the existing tree, it must balance exploration and exploitation. When exploring, the next state is chosen randomly and is highlighted in orange. When exploiting, the next state is chosen so as to (in our case) minimize the payoff from the current action plus the corresponding continuation value. Exploitation choices are highlighted in purple. (Happens but once in this example.) After the path reaches the boundary of the existing tree, it continues randomly. The first state on that random continuation is highlighted in orange in the diagram, as it gets added to the tree, and the remaining states are highlighted in grey.

Once the path is drawn, the continuation values along the path are back-propagated from right to left. If the new is better than the old one, the new one is chosen, otherwise the old one is kept. For those familiar with MCTS, this part is different from what would typically be a gradual update rule but seems to work substantially better on this particular problem.

The grey part of the path is discarded when no longer needed. Then a new path is drawn, the values are again updated, and so on.

Save for the artsy bits—the choice of colours, how many optimal paths to draw, how fast to animate fade-outs—I could visualize in advance how the animations for Bellman’s principle and Dijkstra’s algorithm were going to pan out. They were certainly fun to make but maybe a bit less fun to watch. Like watching a series after reading the book. Not so for MCTS. I’m probably more curious myself at this point that any reader that has gotten this far. (Though, if no reader has gotten this far, the comparison is not well defined...) So, let’s see.

Blue shows how far the tree has grown. (It has grown far for it needs to reach the right boundary, obviously, but it has not grown wide, which is... Surprising? Not surprising?) Orange shows recently visited states, which in the case of MCTS are paths drawn from the origin to the right boundary. The heads of these paths are used to build the tree. The tails, as discussed earlier, are discarded. Dark green shows the final optimal path.

Pontryagin’s Maximum Principle

None of the algorithms discussed above, with the possible exception being Bellman’s principle, would be an efficient choice to solve the stated problem. The point rather being, all these general algorithms can be applied to the stated problem, and the problem is trivially embeddable in , and that allows to visually compare how these algorithms work. If, on the other hand, we were actually interested in solving this specific problem, we’d apply a specific algorithm best suited here, which would be Pontryagin’s maximum principle. Not coincidentally, it’s also the algorithm used by Brinkhuis and Tikhomirov’s textbook, from which I’ve lifted the problem.

To prevent this blog from becoming a textbook in its own right, I must skip over pretty much every detail here. Let me just give a very brief intuition. The original problem, i.e. before the discretization, is smooth. In a smooth problem, if we zoom in far enough, everything becomes linear (or, to be precise, piecewise linear as need not be continuous). That means that in a small local neighbourhood we can compute analytically what the optimal control should be. Piecing together these local solutions gives a differential equation that the global optimal solution must follow. There will be multiple candidate paths that satisfy the differential equations and these paths are actually one and the same as the purple paths in the animation of Dijkstra’s algorithm. As for the optimal path, it must additionally satisfy the so-called transversality conditions. Cutting to the chase, instead of exploring the whole state space, we simply try some candidate paths and look for the one that satisfies the transversality conditions.

It is really the smooth nature of the problem that imposes a lot of structure on it, which effectively makes the problem a lower-dimensional one. Consequently, Pontryagin’s maximum principle solves the problem fast. When discretized, that extra structure is lost, and the problem becomes harder. That’s why all the previous algorithms I’ve discussed are suboptimal on this problem.

In textbooks, Pontryagin’s principle is only used on problems that can be solved analytically but that’s a textbook’s restriction, not the method’s restriction. Pontryagin’s principle can also be run numerically and so applied to any sufficiently smooth dynamic optimization problem. That’s what I’ve done here as well. Not because it’s necessary, but because visualizing an analytical solution would be rather boring. The coarse grid won’t help in explaining anything here, so I will immediately go to the fine grid (where , effectively meaning that I’m solving the respective differential equation using ).

I’d like to share an opinion at this point. Pontryagin’s maximum principle and Monte Carlo tree search have two points in common. One is silly, they both search for the solution by drawing candidate paths as the animations so nicely attest to. Another one is something to ponder, for both algorithms rely on the smoothness of the problem to some extent. This smoothness requirement is explicit in Pontryagin’s maximum principle. As for Monte Carlo tree search, while one can run it on any problem, if there are pockets in the state space where the problem behaves very differently, the partially random search might miss those pockets and the resulting outcome might be quite rubbish. Contemporary MCTS applications use neural networks to approximate value functions. A number of explanations can be offered why that really helps with some problems, and one possible explanation, IMHO, is that a suitably chosen neural network makes the problem locally more smooth thus mitigating the aforementioned difficulty.

And this is it. In place of a conclusion, on to

Silly Benchmarks

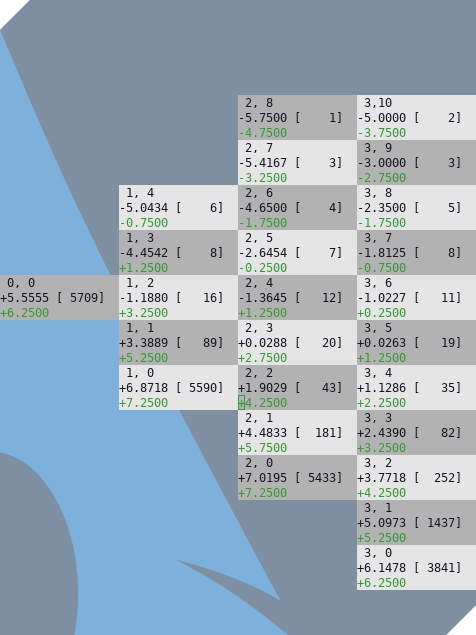

These benchmarks are silly because they are incomplete and thus misleading. After all, they are for this specific problem only and can hardly be generalized. And if Dijkstra’s algorithm and MCTS were always inferior to, say Bellman’s principle, nobody would be using them. The benchmarks are also silly because the implementations I’ve written are single-threaded. In practice, all these algorithms can be parallelized with a varying degree of benefit, and many elements can be parallelized on a GPU, so the practically relevant numbers will be different. Finally, these are not peer-reviewed, practically used, well-debugged implementations. Just something I wrote the other day. So, having done the disclaimers, here are the numbers. (The programs are in C, the source code is available on gitlab, the column names in the table below link to the respective source files; the benchmarks have been run on AMD Ryzen 3600.)

| Bellman | Dijkstra | MCTS | Pontryagin | |

|---|---|---|---|---|

| Running time | 0.05 s | 0.63 s | 7.07 s | 0.00 s |

| Dynamic memory | 4 MB | 54 MB | 3 MB | 0 MB |

| Visited states | 100% | 59% | 74% | 0.2% |

| Visits / state | 22 | 22 | 395 | 4 |

| Stored states | 100% | 59% | 5% | 0% |

| Mem / state | 4 B | 88 B | 58 B | NA |

| L1 misses / visit | 0.0004 | 2.7 | 2.0 | 0.4 |

The timing for MCTS excludes metaparameter optimization. Visited states are all the states that were visited during the computation and stored states are those states that needed to be stored in memory, in one form or another. Visits / state gives the average number of visits per visited state, and mem / state gives the average dynamic memory per stored state. L1 misses / visit, which any reader can safely ignore, and which is used to make an argument a few paragraphs down the line, gives the average count of L1 cache misses per state visit.

Pontryagin’s maximum principle solves the original smooth problem, and we see that the extra structure that that smoothness imposes helps a lot. The other three algorithms solve the harder discretized problem.

While Dijkstra’s algorithm has less complexity in terms of the number of state visits than Bellman’s principle—same visits per state but fewer states visited—it uses a hash table, which is an expensive data structure. It also uses a priority queue and it needs to maintain a collection of optimal paths. All that makes Dijkstra’s algorithm slower and less memory efficient on this problem.

Why is a hash table necessary? It’s a catch-22. The potential memory-saving advantage of Dijkstra’s algorithm and MCTS is that they store only a fraction of the state space in memory. However, since we do not know in advance which states those are, and we need to be able to access them randomly, a hash table is required to store them. On this particular problem the overhead of a hash table outweighs the benefits of not needing to store the whole state space in memory.

(The reason a hash table is much slower than a plain array on this problem is subtle. It has in fact less to do with the extra processing instructions and more to do with processor caches. After all, a good hash function is a function that avoids collisions. As a direct consequence, the states that are close to one another in the state space will be nowhere near one another in memory, when stored in a hash table. So, as either Dijkstra’s algorithm or MCTS traverse the state space, every single state is more likely to be fetched from the L2 or L3 cache and not from the L1 cache. Whereas with Bellman, the states are stored sequentially in one big array, and given the algorithm mostly considers the states that are next to one another—when choosing the optimal actions—these states are mostly fetched from the L1 cache.)

As for MCTS, it turns out to be more memory efficient than Bellman’s principle but it is also much slower because it has a higher complexity in terms of state visits. Can that complexity be further reduced? I’m sure it can be. I’m also sure I’m not doing that for I had my fill with MCTS for this month.